Founder | Agentic Systems & AI Engineering

PowerLogic Solutions, LLC · 2025 – Present · Remote

- Built launch-stage RAG pipelines, multi-agent frameworks, and AI-integrated workflow systems.

- Developed proprietary AI products: VantaMap™, ProfDNA™, and the Adaptive Agent Framework™ (AAF™).

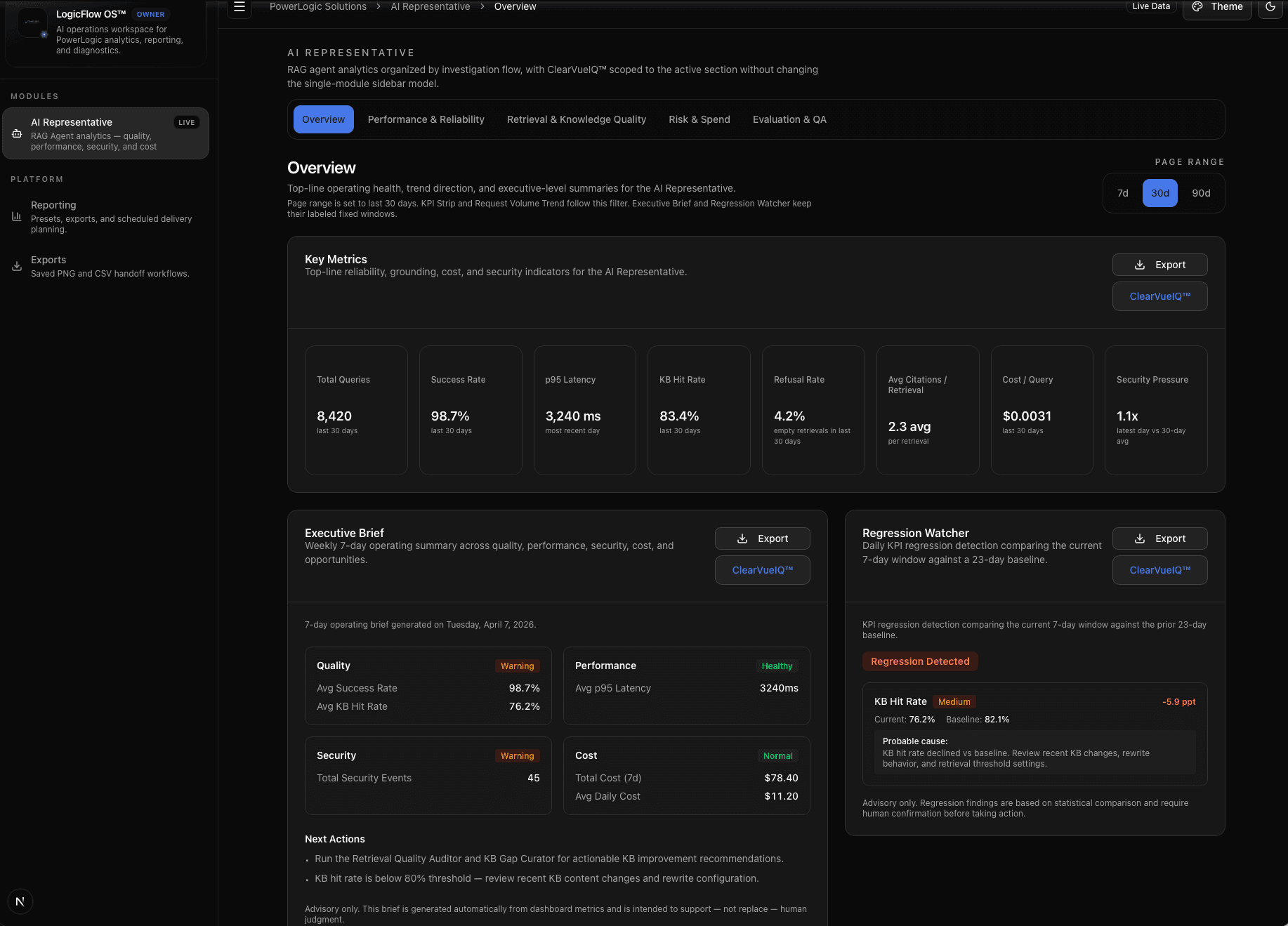

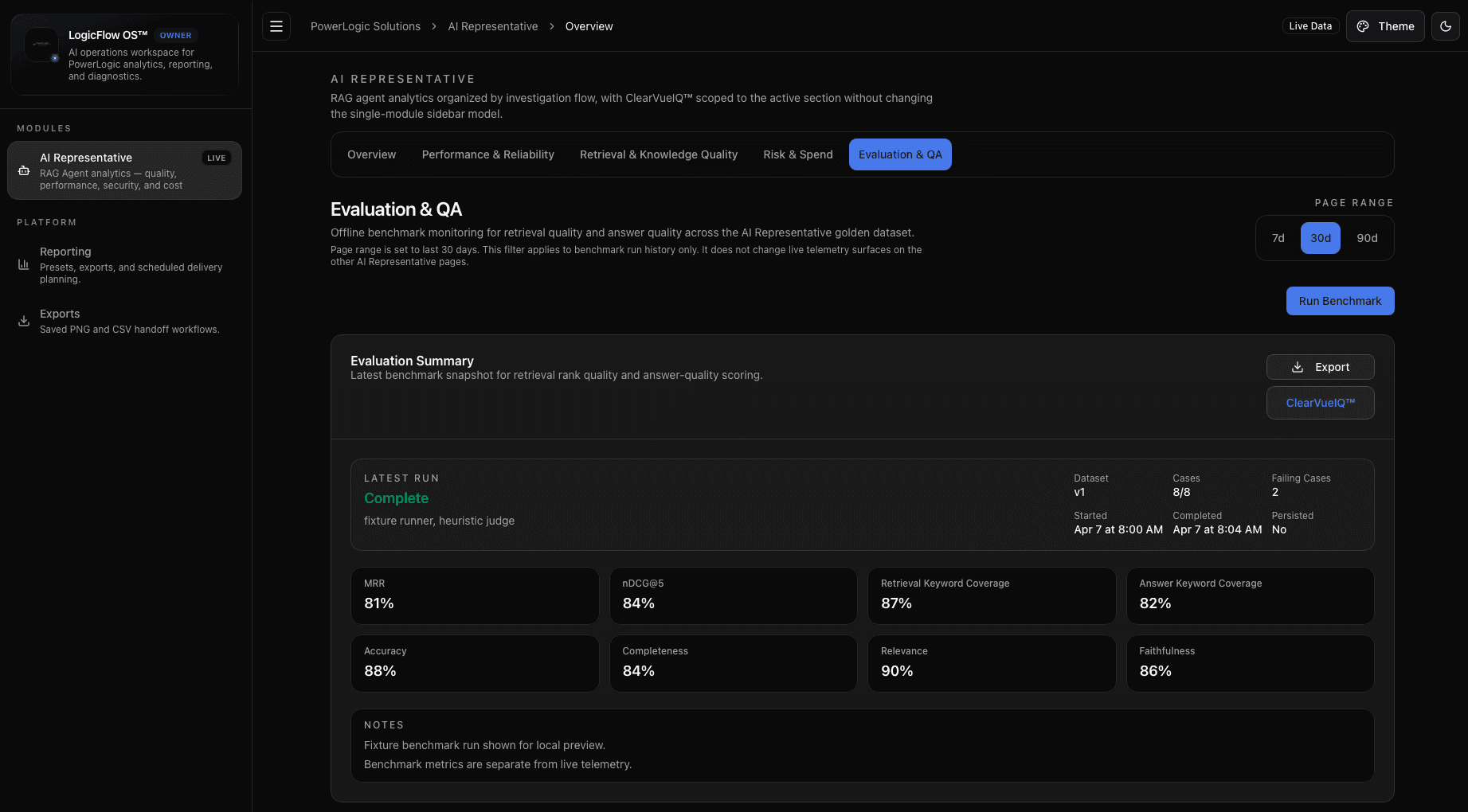

- Designed LogicFlow OS™: an AI agent observability, security, and analytics platform.